Let’s talk about cleaning. The most valuable element of a software piece is the function (a.k.a. procedure, method, event, operation…). A function looks like this:

functionX (datatype: input): output

A function is an operation that receives input values and might return some other value. The IPO (input-process-output) model describes this crucial element well. Thousands of lines and discussions have been devoted to this simple concept, yet the clean function seems to evade a conventional definition that leaves all programmers and theorists happy.

So, this is a proposal: a definition based on a concrete analysis of the function structure and on hundreds of trial-and-error efforts.

Take time to evaluate this function (C# or Java code):

IResponse OperationName(IRequest req)

Let’s explain each one:

- IResponse

IResponse might be any interface defined as a response model (data structure) that your function returns from the operation. A response model is a simple data structure: a DTO[1], a model (MVC jargon)[2], or a POCO (POJO in Java)[3], although sometimes a simple hashtable might be enough. Defining it as an interface satisfies the OCP[4]: if I want to add a new property to the old IResponse implementation, I only need to define and implement a new interface with that property. The old clients will behave the same; the new clients will get the benefit of the new attributes.

- Operation

An operation is a capability a system has in response to a message of the same name. The operation Save is what the system executes in response to the message Save from a client or caller. Any system is known by its public interface, which encapsulates and hides its data or state, and the implementation of its operations, from strangers.

- IRequest

Just as the IResponse defines the outputs of the operation, the IRequest defines the data structure of the inputs the client provides. The description of the IResponse and its implications also applies to the input data structures.
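A minimal Java sketch of this shape follows. The interface members, IAuditedResponse, AuditedResponse, and the save operation are hypothetical names invented for illustration. The method name uses Java's camelCase instead of the PascalCase shown in the signature above.

```java
// Hypothetical sketch: every member and type beyond IRequest/IResponse is invented.
class RequestResponseSketch {

    // Input model: what the client must provide.
    interface IRequest {
        String name();
    }

    // Output model: what the operation promises to return.
    interface IResponse {
        boolean succeeded();
    }

    // Open-closed extension: a new interface adds a property
    // without touching IResponse or its existing clients.
    interface IAuditedResponse extends IResponse {
        String savedBy();
    }

    // One concrete response that implements the extended contract.
    static final class AuditedResponse implements IAuditedResponse {
        private final boolean succeeded;
        private final String savedBy;

        AuditedResponse(boolean succeeded, String savedBy) {
            this.succeeded = succeeded;
            this.savedBy = savedBy;
        }

        @Override public boolean succeeded() { return succeeded; }
        @Override public String savedBy() { return savedBy; }
    }

    // The operation itself, in the form IResponse OperationName(IRequest req).
    static IResponse save(IRequest req) {
        boolean ok = req.name() != null && !req.name().isEmpty();
        return new AuditedResponse(ok, "system");
    }

    public static void main(String[] args) {
        IRequest req = () -> "Ada";      // IRequest has one method, so a lambda can implement it
        IResponse res = save(req);       // old clients see only IResponse
        System.out.println(res.succeeded());

        if (res instanceof IAuditedResponse) {   // new clients opt in to the new attribute
            System.out.println(((IAuditedResponse) res).savedBy());
        }
    }
}
```

Old callers keep compiling against IResponse, while callers that know about IAuditedResponse can read the extra attribute.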

Taken as an example, the function conveys several principles and patterns under the good old philosophy of Unix.[5]

Programming to an interface, not an implementation

Why is the input/output pair defined as abstractions or interfaces? The answer is related to a basic principle of object-oriented solutions:

Programming to an interface, not an implementation[6]

But what does this principle really mean? It means that every client request must be issued to an abstraction, not a concrete module. See this:

In the above diagram the client module calls a service provider via its interface type, not as a class or implementation. There is no new ServerX() call after declaring a server variable, but a call to a Factory that returns a concrete type of IService. This means that a function call is always a call to the interface of its type, not a direct call to a concrete class. The creational design patterns, like the Factory and Factory Method, are crucial in this relation between modules. The factories are Creators (GRASP, Larman) of the servers.

Therefore, the three elements that make up the IPO function structure are based on abstractions (interfaces), not concrete implementations, while the initialization problem is solved with factories or abstract parameters. This basic communication structure is also the foundation of many design patterns (e.g., state, strategy, abstract factory, prototype) and of the clean architecture paradigm.
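The relation between client, interface, and factory might be sketched in Java as follows. IService and ServerX come from the text; ServiceFactory, handle, and client are hypothetical names chosen for this illustration.

```java
// Hypothetical sketch: ServiceFactory, handle(), and client() are illustrative names.
class InterfaceFactorySketch {

    // The abstraction the client depends on.
    interface IService {
        String handle(String message);
    }

    // A concrete provider; client code never names this type.
    static final class ServerX implements IService {
        @Override
        public String handle(String message) {
            return "ServerX handled " + message;
        }
    }

    // The factory is the only place that knows the concrete class.
    static final class ServiceFactory {
        static IService create() {
            return new ServerX();
        }
    }

    // Client code: the variable is typed as the interface,
    // and there is no `new ServerX()` call here.
    static String client(String message) {
        IService server = ServiceFactory.create();
        return server.handle(message);
    }

    public static void main(String[] args) {
        System.out.println(client("Save")); // prints ServerX handled Save
    }
}
```

Swapping ServerX for another implementation only touches ServiceFactory; the client stays unchanged.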

CQSP (command-query separation principle, B. Meyer)

This principle advocates the separation of two types of operations. Commands are operations that change the current state of the system: the caller calls an inner method to provoke a change, or passes itself as an input to a colleague outside its control that transforms it. Queries, on the other side, are requests for data: operations that return some data structure or a primitive value. Mixing the two in one operation is a classical violation of this principle.

The principle also constrains the treatment of the input values. In a query the input is immutable. It serves only as a specification argument for constructing the output structure or value. In a command, on the contrary, it is the client of the function that wants a change in its state or in the state of an object it controls, so it passes itself or one of its components.

We might consider the command the default operation. That’s how the system changes and expresses behavior. The query functions are of several types. The most common is the simple calculation, as in Sum(int a, int b): int, which encloses a formula (mathematical, statistical, physical). A similar one is the validator method, as in IsActive(): bool or HasData(): bool. In this case there’s no formula but the validation of a state. Other query functions return primitive values for simple explorations of data, as in GetDodFor(Person p): date or GetPlateNumFor(Car c): string. The most complex are the query functions that return a data structure or object. Those functions ask for a structure that the caller doesn’t have; the caller needs another object to build a usable instance. Those query functions act as factory methods, as in MakeCommandFor(IStorable s): PersistentCommand.

The principle then yields the following possible operations and their most typical uses:

Queries

- IResponse QueryXByParameter(IRequest req)

Used for queries based on concrete lookup specifications. The IResponse is defined based on the IRequest values.

- IResponse QueryX()

Typical for retrieve-all queries or simple accessors of data from strangers, but also for factory methods that make an instance of an object different from the caller.

Command

- void ExecuteXByParameter(IRequest req)

Most notably, this command is a request to a colleague to change the state of the caller.

- void ExecuteX()

It’s the common private command to request internal changes (the state is reachable by the function). It may also be a request to a colleague to change based on its current internal state.

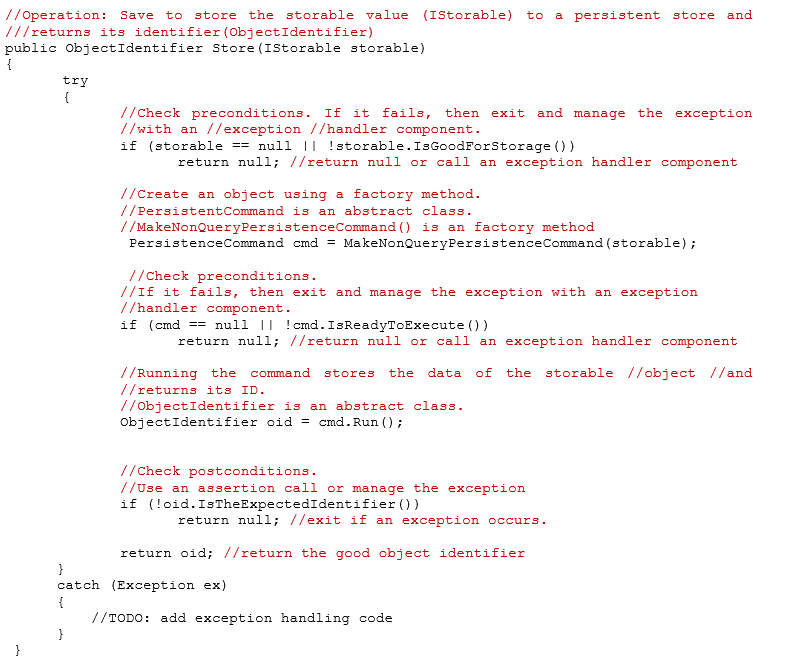
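The four shapes listed above can be sketched on a single class. Inventory and its methods are hypothetical, and plain String and List values stand in for the IRequest and IResponse models to keep the example short.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical sketch: Inventory and its methods are invented for illustration.
class CommandQuerySketch {

    static final class Inventory {
        private final List<String> items = new ArrayList<>();

        // Command with a parameter: changes state, returns nothing.
        void addItem(String item) {
            items.add(item);
        }

        // Command without a parameter: changes state using what the object already knows.
        void clear() {
            items.clear();
        }

        // Query with a parameter: answers from the input, changes nothing.
        boolean contains(String item) {
            return items.contains(item);
        }

        // Query without a parameter: a retrieve-all that hands out a read-only view.
        List<String> allItems() {
            return Collections.unmodifiableList(items);
        }
    }

    public static void main(String[] args) {
        Inventory inventory = new Inventory();
        inventory.addItem("bolt");
        System.out.println(inventory.contains("bolt"));       // prints true
        System.out.println(inventory.allItems().size());      // prints 1
        inventory.clear();
        System.out.println(inventory.allItems().isEmpty());   // prints true
    }
}
```

No method both changes state and returns data, which is the separation the principle asks for.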

Design by Contract

The Design-by-contract principle was named by Bertrand Meyer[7] as a construction principle suited to functions. Its purpose is to evaluate the correctness of a software piece by establishing the constraints on its inputs and outputs. This «software correctness methodology» defines the body of a software module with this structure: preconditions, invariant, and post-conditions.

- Preconditions

Preconditions are the input state that should be true before the execution of the operation. If the preconditions aren’t met by the input data, the operation must end immediately with an exception notice to the client or the caller of the service.

- Invariant

The invariant refers to what must always be true for all instances before and after the operation. In the case of a function, the invariant refers to the promise about which state remains unchanged after the function ends. If we follow the CQSP, the operation is designated as a command or a query. In a command, the function changes the state of the input values, but not the state of a third-party module receiving the call, for example. If a call from A to object B modifies both A and C, there is a strong dependency between A and C. Most probably the state of C should be part of A, making C redundant.

In a query, the expectation is the same as in a command with the additional obligation to maintain the input as immutable.

- Post-conditions

Post-conditions determine what must be true as the result of the operation. They point to the output of the operation or to the change in the state of the system allowed by the invariant. In the first case, the post-condition evaluates the good condition of the constructed structure or value. In the second, it evaluates the correctness of the new state of the system.

The following code simulation uses the above principles to show the concept:
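What follows is a minimal Java sketch, offered as an illustration and not as the author's own listing. It assumes a hypothetical Account class with a withdraw command. The contract is checked with plain exceptions, which is only one of several ways to express it.

```java
// Hypothetical sketch: Account, balance(), and withdraw() are invented for illustration.
class ContractSketch {

    static final class Account {
        private long balanceInCents;

        Account(long openingBalanceInCents) {
            if (openingBalanceInCents < 0) {
                throw new IllegalArgumentException("opening balance must not be negative");
            }
            this.balanceInCents = openingBalanceInCents;
        }

        // Query: reads state without changing it.
        long balance() {
            return balanceInCents;
        }

        // Command: changes state under an explicit contract.
        void withdraw(long amountInCents) {
            // Preconditions: reject bad input immediately with an exception.
            if (amountInCents <= 0) {
                throw new IllegalArgumentException("amount must be positive");
            }
            if (amountInCents > balanceInCents) {
                throw new IllegalArgumentException("insufficient funds");
            }

            long before = balanceInCents;
            balanceInCents -= amountInCents;

            // Post-condition: the new state reflects exactly the requested change.
            if (balanceInCents != before - amountInCents) {
                throw new IllegalStateException("post-condition violated");
            }
            // Invariant: the balance is never negative, before or after any operation.
            if (balanceInCents < 0) {
                throw new IllegalStateException("invariant violated: negative balance");
            }
        }
    }

    public static void main(String[] args) {
        Account account = new Account(10_000);
        account.withdraw(2_500);
        System.out.println(account.balance()); // prints 7500
    }
}
```

A caller that breaks a precondition gets an exception and the account state stays untouched.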

What about inner variables?

Functions should, most of the time, use primitive types for loop controls and temporary values in their internal work. For any interaction with the exterior, the object should be injected into the function through its inputs. In a query function, or when the function uses an internal object to perform some task as in the example above, the returned module should be defined at an abstract enough level to manipulate or read values. There is no new ConcreteClass() code in the instantiation of those values. You must use factory methods or Factory servers when the concrete instance implements an interface defined inside another package. The exception to this rule is when the returned value is part of the same module or part of an implementation family. In this last case, the function that implements the interface of the query in a separate component will use the concrete instance of its family. The family object is part of a cohesive solution to commands or queries. Still, the variable that points to the concrete instance should be abstract.

Cleansing and Refactoring

There are several refactoring measures you might take to clean your functions. The classical extract method refactoring and its variants allow us to detach a piece of cohesive code into another method or procedure. A family of related mechanisms (e.g., extracting conditional blocks, switch statements, and for loops) cleans code in the same way and trims the function.

But those fragmenting measures come with a dependency problem: the old method now depends on the new methods. While we want highly cohesive functions, extracting parts of a bloated procedure increases the dependencies of the operation on multiple private methods. Is that desirable?

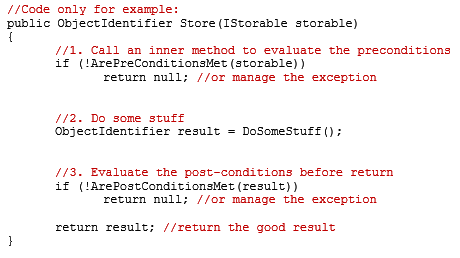

The most common solution is to create a master function or procedure that defines the algorithm to call all the minor private and derived functions. The master function knows about the other procedures. It’s the façade to the clients who will call the operation. The minor procedures then are independent and reusable. This master function is something like the pseudo-code below:
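As an illustration only, here is one way such a master function might look in Java. The order-total scenario and every name in it (totalInCents, validate, sum, applyDiscount) are assumptions made for this sketch.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical sketch: the order-total scenario and all names are invented for illustration.
class MasterFunctionSketch {

    // Master function: states the algorithm and delegates each step.
    static long totalInCents(List<Long> pricesInCents, int discountPercent) {
        validate(pricesInCents, discountPercent);
        long subtotal = sum(pricesInCents);
        return applyDiscount(subtotal, discountPercent);
    }

    // Extracted step 1: guard the inputs.
    private static void validate(List<Long> pricesInCents, int discountPercent) {
        if (pricesInCents == null) {
            throw new IllegalArgumentException("prices are required");
        }
        if (discountPercent < 0 || discountPercent > 100) {
            throw new IllegalArgumentException("discount must be between 0 and 100");
        }
    }

    // Extracted step 2: a pure calculation with no knowledge of discounts.
    private static long sum(List<Long> pricesInCents) {
        long total = 0;
        for (long price : pricesInCents) {
            total += price;
        }
        return total;
    }

    // Extracted step 3: a pure calculation with no knowledge of price lists.
    private static long applyDiscount(long amountInCents, int percent) {
        return amountInCents - (amountInCents * percent) / 100;
    }

    public static void main(String[] args) {
        System.out.println(totalInCents(Arrays.asList(1_000L, 2_500L), 10)); // prints 3150
    }
}
```

Only the master function knows the order of the steps; each minor function depends on nothing but its own inputs.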

Testability

The previous functions share an important quality: testability. Both functions can be tested by injecting or factoring concrete objects based on the abstractions. This independence is a core value of all functions that carries over to our modules or classes, and then to our packages or components.
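A small Java sketch of the idea, assuming a hypothetical IClock abstraction and a greeting function that depends on it. A test injects a stub in place of the real collaborator, so the result is deterministic.

```java
// Hypothetical sketch: IClock and greeting() are invented for illustration.
class TestabilitySketch {

    // The abstraction the function depends on.
    interface IClock {
        int currentHour(); // expected range: 0..23
    }

    // The function under test receives its collaborator through its input.
    static String greeting(IClock clock) {
        return clock.currentHour() < 12 ? "Good morning" : "Good afternoon";
    }

    public static void main(String[] args) {
        // Stubs with fixed hours stand in for a real clock.
        IClock nineAm = () -> 9;
        IClock threePm = () -> 15;
        System.out.println(greeting(nineAm));   // prints Good morning
        System.out.println(greeting(threePm));  // prints Good afternoon
    }
}
```

Because greeting never constructs its own clock, no system time is involved in checking its behavior.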

Final words, share your views

The definitions and models described above are only one view of a software implementation problem under any SDLC process. Finding the perfect function is not a matter of luck but an effort achieved by adopting the best practices and principles of the computer science and engineering disciplines. In the lines above I discuss only several of them, but obviously there are many others you should consider.

A practice based on principles, and on the empirical data that supports them, is a professional path that increases your intellectual assets.

What do you think? What is your perfect function? What principles do you follow to write this basic building block of your software pieces?

[1] Data transfer object.

[2] See Model-View-Controller at https://en.wikipedia.org/wiki/Model%E2%80%93view%E2%80%93controller.

[3] Plain-old C# object or Plain-old Java object.

[4] Open-Closed Principle (R.C. Martin). The open-closed principle states that a software piece should be ready to be extended without any critical modification. See https://en.wikipedia.org/wiki/Open%E2%80%93closed_principle.

[5] About Unix philosophy, see https://en.wikipedia.org/wiki/Unix_philosophy.

[6] See GoF, Design Patterns, Introduction which is the original source. Instructive is: https://www.codeproject.com/Articles/702246/Program-to-Interface-not-Implementation-Beginners
